The Energy Lie: Why Neuromorphic Chips Are Quietly Killing the Silicon Empire (And Who Profits)

The breakthrough in neuromorphic computing isn't about faster math; it's about dismantling the massive energy footprint of modern AI. Discover the hidden cost of computation.

Key Takeaways

- Neuromorphic computing fundamentally solves the energy crisis facing large-scale AI by mimicking biological neural efficiency.

- The technology shifts computational power away from centralized data centers to localized, low-power edge devices.

- The primary beneficiaries will be defense and specialized industrial sectors initially, disrupting the dominance of current cloud giants.

- Expect a five-year horizon for widespread 'hybrid' chips integrating neuromorphic cores into mainstream hardware.

The Hook: Is Your Supercomputer a Climate Liability?

We celebrate every leap in artificial intelligence: every new LLM, every breakthrough in protein folding. But behind the curtain, the engine room of modern AI is a roaring furnace, with each generation of models demanding far more energy than the last. This unsustainable thirst for power has been the industry's dirty secret. Now a quiet revolution is underway, centered on **neuromorphic computing**: chips designed not like von Neumann machines, but like the human brain itself. This isn't just an incremental speed boost; it's a fundamental, potentially civilization-altering paradigm shift in how we process information.

The Meat: Brains Over Binary

The core innovation, as reported by ZME Science, lies in mimicking biological neural networks. Traditional silicon chips separate memory from processing, so data must be shuttled back and forth constantly. This energy-intensive traffic is the so-called 'von Neumann bottleneck.' Neuromorphic architectures instead integrate the two functions. They communicate with discrete 'spikes' (and, in some designs, analog signals), which is far closer to how neurons fire than conventional clocked binary logic. The result? Calculations that previously required massive data centers could potentially run on a fraction of the power. This is the key to unlocking truly ubiquitous, always-on AI.
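To make the 'spikes' idea concrete, here is a minimal sketch of a leaky integrate-and-fire (LIF) neuron, a textbook spiking-neuron model. It is an illustration under assumed parameters (time step `dt`, leak constant `tau`, threshold), not the model used by any particular chip. The neuron's membrane potential leaks toward rest and accumulates input. It emits a discrete spike only when it crosses a threshold, after which it resets.

```python
def simulate_lif(input_current, dt=1.0, tau=20.0, v_rest=0.0,
                 v_threshold=1.0, v_reset=0.0):
    """Simulate one leaky integrate-and-fire neuron.

    All parameter values are illustrative assumptions. Returns the indices
    of the time steps at which the neuron spiked.
    """
    v = v_rest
    spike_times = []
    for t, i_in in enumerate(input_current):
        # Forward-Euler step: leak toward v_rest, then add the input drive
        v += dt * (-(v - v_rest) / tau + i_in)
        if v >= v_threshold:
            spike_times.append(t)  # emit a discrete spike event
            v = v_reset            # reset the membrane potential after firing
    return spike_times


# A constant, weak input makes the neuron fire periodically
print(simulate_lif([0.1] * 50))  # [13, 27, 41]
```

Between spikes the neuron produces no output at all. In event-driven hardware, that silence is where much of the hoped-for energy saving is expected to come from.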

Why is this massive energy reduction so critical? Because the growth trajectory of AI computation is terrifying. If current trends continue, the energy demands of AI could rival those of entire nations. **Neuromorphic chips** offer arguably the most credible escape route from this looming infrastructural crisis. This isn't just about saving electricity; it's about keeping AI scaling viable in the age of massive models, just as the gains from Moore's Law are slowing.

The Unspoken Truth: Who Really Wins (And Who Loses)?

The immediate winners are the defense contractors and specialized industrial players who can afford the early R&D. But the real tectonic shift is geopolitical. Nations currently leading in AI (the US and China) are simultaneously grappling with massive energy grid constraints. Whoever masters scalable, low-power **AI hardware** first gains an asymmetric advantage. Think real-time, localized battlefield analysis or instant climate modeling that doesn't require a dedicated power plant.

The losers? The legacy semiconductor giants whose entire business model rests on scaling up existing, energy-hungry architectures. They will fight tooth and nail to maintain the status quo, likely by acquiring promising startups or lobbying for regulatory inertia. The real agenda here isn't just better chips; it's a decentralization of computational power away from hyperscale cloud providers toward localized, edge-based processing. This is a massive threat to the current cloud oligopoly.

Where Do We Go From Here? The Prediction

Forget the immediate product releases. My prediction is that within five years, neuromorphic principles, even if not fully realized in pure analog form, will fundamentally reshape the architectures of mainstream GPUs and CPUs. We will see a 'hybridization' in which specialized cores optimized for brain-like efficiency handle constant, low-level inference tasks, leaving the massive, energy-guzzling conventional architecture for training runs only. Furthermore, expect a massive push into 'in-memory computing' patents, turning the legal landscape around **computer chips** into the next great tech warzone, far surpassing the current patent battles over software algorithms. The hardware layer is the new frontier.
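'In-memory computing' generally means doing arithmetic where the data is stored. A common textbook picture is a resistive crossbar. Weights are stored as conductances G_ij, and input voltages V_i are applied to the rows. By Ohm's law and Kirchhoff's current law, each column then carries a current I_j = sum_i G_ij * V_i, so a matrix-vector product is computed in place. The sketch below simulates an idealized crossbar. It ignores wire resistance, device noise, and analog-to-digital conversion. The differential-pair encoding for negative weights is one common approach, assumed here for illustration and not a detail from the source. All function names are hypothetical.

```python
def to_differential_conductances(weights, g_max=1.0):
    """Encode signed weights as two non-negative conductance arrays.

    Physical conductances cannot be negative, so each weight is split into a
    positive part (G_plus) and a negative part (G_minus), scaled to g_max.
    """
    w_max = max(abs(w) for row in weights for w in row) or 1.0  # avoid /0
    scale = g_max / w_max
    g_plus = [[max(w, 0.0) * scale for w in row] for row in weights]
    g_minus = [[max(-w, 0.0) * scale for w in row] for row in weights]
    return g_plus, g_minus, scale


def column_currents(conductances, voltages):
    """Idealized crossbar read-out: I_j = sum_i G[i][j] * V[i]."""
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for v, row in zip(voltages, conductances):
        for j, g in enumerate(row):
            currents[j] += g * v  # Ohm's law per cell, summed per column (KCL)
    return currents


def crossbar_matvec(weights, inputs, g_max=1.0):
    """Matrix-vector product computed 'inside' a simulated crossbar."""
    g_plus, g_minus, scale = to_differential_conductances(weights, g_max)
    i_plus = column_currents(g_plus, inputs)
    i_minus = column_currents(g_minus, inputs)
    # Differential sensing: subtract paired columns, then undo the scaling
    return [(p - m) / scale for p, m in zip(i_plus, i_minus)]


# Rows correspond to inputs and columns to outputs (hypothetical values)
weights = [[0.5, -1.0],
           [2.0, 0.25]]
print(crossbar_matvec(weights, [1.0, 2.0]))  # [4.5, -0.5]
```

The point of the design is that the weights never travel to a separate processor. The multiply-accumulate happens in the memory array itself, which directly targets the data-shuffling cost described earlier.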

Key Takeaways: The TL;DR

- Neuromorphic chips mimic the brain to drastically cut AI energy consumption.

- This addresses the unsustainable energy demands threatening AI scaling.

- Legacy chipmakers face an existential threat from this architectural shift.

- Expect rapid hybridization of current chips with neuromorphic principles within five years.

Frequently Asked Questions

What is the main difference between neuromorphic chips and traditional CPUs?

Traditional CPUs use the von Neumann architecture, which separates memory and processing and therefore requires constant, energy-hungry data shuffling. Neuromorphic chips integrate memory and processing and mimic the brain's sparse, event-driven 'spiking' activity, which can yield large energy savings on suitable workloads.
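The 'sparse, event-driven' point can be illustrated by counting work. The sketch below is a hypothetical comparison, not a model of any real chip. A clocked, dense layer visits every synapse each step, while an event-driven layer only touches the synapses of inputs that actually spiked. The layer sizes and the 2% spike rate are arbitrary assumptions. Operation counts are only a rough proxy for energy.

```python
import random


def dense_update(weights, activity):
    """Clocked, dense layer: every synapse is visited each step."""
    n_out = len(weights[0])
    out = [0.0] * n_out
    ops = 0
    for i, a in enumerate(activity):
        for j in range(n_out):
            out[j] += weights[i][j] * a  # work is done even when a == 0
            ops += 1
    return out, ops


def event_driven_update(weights, spike_indices):
    """Event-driven layer: only synapses of inputs that spiked are touched."""
    n_out = len(weights[0])
    out = [0.0] * n_out
    ops = 0
    for i in spike_indices:
        for j in range(n_out):
            out[j] += weights[i][j]  # a spike carries an implicit value of 1
            ops += 1
    return out, ops


rng = random.Random(0)
n_in, n_out = 1000, 100  # hypothetical layer sizes
weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_out)] for _ in range(n_in)]
spikes = sorted(rng.sample(range(n_in), 20))  # assume 2% of inputs spike
spike_set = set(spikes)
activity = [1.0 if i in spike_set else 0.0 for i in range(n_in)]

dense_out, dense_ops = dense_update(weights, activity)
event_out, event_ops = event_driven_update(weights, spikes)
print(dense_ops, event_ops)  # 100000 2000
```

Both functions produce the same layer output. Only the amount of work differs, and the gap grows as activity gets sparser.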

How does this technology affect current AI models like ChatGPT?

While current large language models (LLMs) are primarily trained on massive, traditional supercomputers, neuromorphic chips are better suited to the inference stage: running the model efficiently once it has been trained. They could enable powerful AI applications to run locally on phones or sensors without a constant cloud connection.

Who are the major players currently investing in neuromorphic hardware?

Major players include Intel (with Loihi), IBM (with TrueNorth), and numerous well-funded startups. Significant investment is also coming from defense research agencies worldwide, drawn by the strategic energy advantage.

Related News

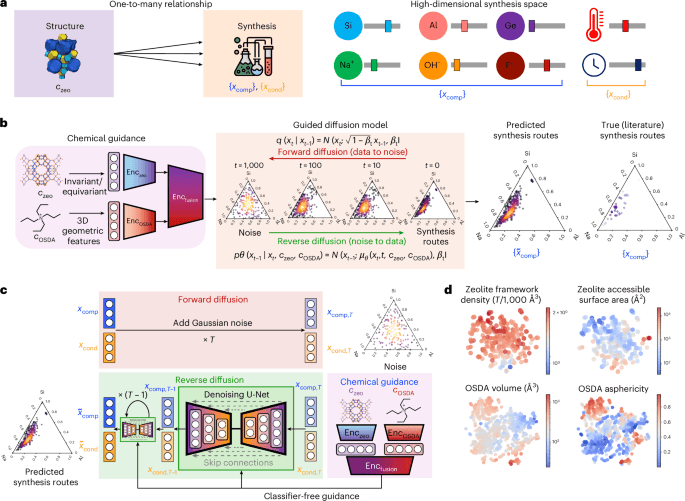

The AI Alchemy Revolution: Why DiffSyn Isn't Just New Science, It's a Threat to Traditional Chemistry Careers

Generative AI like DiffSyn is fundamentally reshaping materials science. Discover the unspoken winners and losers in this new era of chemical discovery.

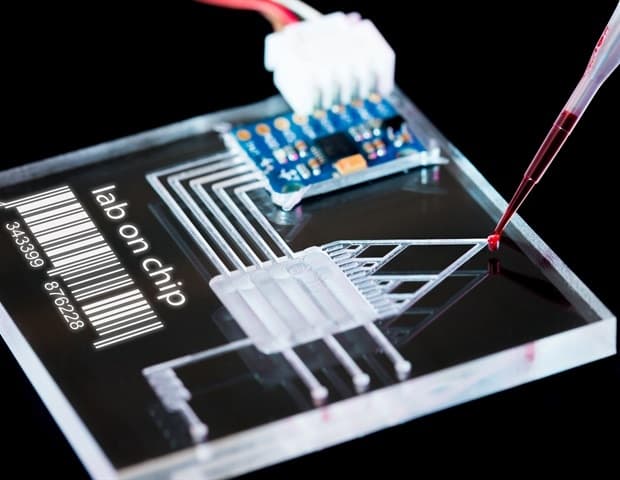

The Hidden Cost of Lab-Grown Organs: Why Simplified Microfluidics Will Bankrupt Traditional Biotech

Digital microfluidic technology is changing 3D cell culture, but the real story is the centralization of pharmaceutical power it enables.

The Hidden Cost of 'AI Saviors': Why Ravender Pal Singh's Math Background Exposes Silicon Valley's Shallow Hype Cycle

The journey from pure mathematics to AI innovation isn't just career progression; it's a warning about AI safety. Unpacking the real stakes.